Problem:

No orchestrators go into active mode.

Expected outcome:

Orchestrators go into active mode.

Foreman and Proxy versions:

[root@10-222-206-158 ~]# yum list installed | grep rubygem-foreman

rubygem-foreman-tasks.noarch 11.0.0-1.fm3_15.el9 @foreman-plugins

rubygem-foreman_kubevirt.noarch 0.4.1-1.fm3_15.el9 @foreman-plugins

rubygem-foreman_maintain.noarch 1:1.10.3-1.el9 @foreman

rubygem-foreman_puppet.noarch 9.0.0-1.fm3_15.el9 @foreman-plugins

rubygem-foreman_remote_execution.noarch 16.0.3-1.fm3_15.el9 @foreman-plugins

rubygem-foreman_salt.noarch 17.0.2-1.fm3_15.el9 @foreman-plugins

rubygem-foreman_statistics.noarch 2.1.0-3.fm3_11.el9 @foreman-plugins

rubygem-foreman_templates.noarch 10.0.8-1.fm3_15.el9 @foreman-plugins

rubygem-foreman_vault.noarch 3.0.0-1.fm3_15.el9 @foreman-plugins

rubygem-foreman_webhooks.noarch 4.0.1-1.fm3_15.el9 @foreman-plugins

[root@10-222-206-158 ~]# yum list installed | grep dyn

dynflow-utils.x86_64 1.6.3-1.el9 @foreman

foreman-dynflow-sidekiq.noarch 3.15.0-1.el9 @foreman

rubygem-dynflow.noarch 1.9.1-1.el9 @foreman

Distribution and version:

Alma 9

Other relevant data:

I cannot get our orchestrators to become active. We have two Foreman servers using a Redis broker and a PostgreSQL backend. Even if I completely purge every ExecutorLock record from PostgreSQL and Redis, it still fails. I have been working on this for over 9 hours and cannot get either orchestrator to acquire a reliable lock. I have tried purging the lock records, flushing the Redis DB, and restarting the servers, and nothing has helped.

Current state:

[root@10-222-206-152 ~]# PGPASSWORD="$PGPASS" psql -h "$PGHOST" -U "$PGUSER" -d "$PGDB" -c "SELECT class, owner_id, COUNT(*)

FROM dynflow_coordinator_records

WHERE class IN ('Dynflow::Coordinator::ExecutorWorld','Dynflow::Coordinator::ClientWorld','Dynflow::Coordinator::DelayedExecutorLock','Dynflow::Coordinator::ExecutionInhibitionLock')

GROUP BY class, owner_id

ORDER BY class, owner_id;"

class | owner_id | count

-------------------------------------------+--------------------------------------------+-------

Dynflow::Coordinator::ClientWorld | | 3

Dynflow::Coordinator::DelayedExecutorLock | world:f318bd94-f5d5-425f-bbc7-9bef17d9f3cd | 1

Dynflow::Coordinator::ExecutorWorld | | 1

(3 rows)

So in this case PostgreSQL thinks the DelayedExecutorLock owner is world f318bd94-f5d5-425f-bbc7-9bef17d9f3cd.

And redis also agrees:

[root@10-222-206-158 ~]# redis-cli -h $REDIS_HOST -p $REDIS_PORT -n $REDIS_DB GET dynflow_orchestrator_uuid

"f318bd94-f5d5-425f-bbc7-9bef17d9f3cd"
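For anyone who wants to repeat the comparison above without eyeballing UUIDs, here is a minimal sketch. It assumes the `owner_id` always has the form `world:<uuid>` as in the PostgreSQL output, and that the Redis value may or may not be wrapped in double quotes. The function names `world_uuid` and `same_orchestrator` are hypothetical helpers of my own, not part of Foreman or Dynflow.

```shell
#!/usr/bin/env bash
# Hypothetical helpers; the names are illustrative, not part of Foreman or Dynflow.

# Strip the "world:" prefix from a dynflow_coordinator_records owner_id.
world_uuid() {
  printf '%s\n' "${1#world:}"
}

# Succeed (exit 0) when the PostgreSQL lock owner and the Redis value
# name the same world, fail (exit 1) otherwise.
same_orchestrator() {
  local pg_owner=$1 redis_uuid=$2
  # redis-cli can print the value wrapped in double quotes; drop them if present.
  redis_uuid=${redis_uuid%\"}
  redis_uuid=${redis_uuid#\"}
  [ "$(world_uuid "$pg_owner")" = "$redis_uuid" ]
}
```

Usage would look like `same_orchestrator "world:f318bd94-f5d5-425f-bbc7-9bef17d9f3cd" "$(redis-cli -h $REDIS_HOST -p $REDIS_PORT -n $REDIS_DB GET dynflow_orchestrator_uuid)" && echo match`. It only confirms that the two stores agree; it says nothing about why the orchestrators stay passive.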

However, regardless of this, neither orchestrator thinks it's active:

[root@10-222-206-152 ~]# systemctl status dynflow-sidekiq@orchestrator.service

● dynflow-sidekiq@orchestrator.service - Foreman jobs daemon - orchestrator on sidekiq

Loaded: loaded (/usr/lib/systemd/system/dynflow-sidekiq@.service; enabled; preset: disabled)

Drop-In: /etc/systemd/system/dynflow-sidekiq@.service.d

└─override.conf

Active: active (running) since Mon 2025-11-10 20:17:40 UTC; 8min ago

Docs: https://theforeman.org

Main PID: 12779 (sidekiq)

Status: "orchestrator in passive mode"

Tasks: 10 (limit: 407761)

Memory: 508.6M

CPU: 5min 9.617s

CGroup: /system.slice/system-dynflow\x2dsidekiq.slice/dynflow-sidekiq@orchestrator.service

└─12779 /usr/bin/ruby /usr/bin/sidekiq -e production -r /usr/share/foreman/extras/dynflow-sidekiq.rb -C /etc/foreman/dynflow/orchestrator.yml

Nov 10 20:17:31 10-222-206-152.ssnc-corp.cloud systemd[1]: Starting Foreman jobs daemon - orchestrator on sidekiq...

Nov 10 20:17:31 10-222-206-152.ssnc-corp.cloud dynflow-sidekiq@orchestrator[12779]: 2025-11-10T20:17:31.246Z pid=12779 tid=ajf INFO: Enabling systemd notification integration

Nov 10 20:17:33 10-222-206-152.ssnc-corp.cloud dynflow-sidekiq@orchestrator[12779]: 2025-11-10T20:17:33.198Z pid=12779 tid=ajf INFO: Booting Sidekiq 6.5.12 with Sidekiq::RedisConnection::RedisAdapter options {:url=>"redis://10-222-172-152.ssnc-corp.cloud:6379/0"}

Nov 10 20:17:33 10-222-206-152.ssnc-corp.cloud dynflow-sidekiq@orchestrator[12779]: 2025-11-10T20:17:33.201Z pid=12779 tid=ajf INFO: GitLab reliable fetch activated!

Nov 10 20:17:40 10-222-206-152.ssnc-corp.cloud systemd[1]: Started Foreman jobs daemon - orchestrator on sidekiq.

[root@10-222-206-158 ~]# systemctl status dynflow-sidekiq@orchestrator.service

● dynflow-sidekiq@orchestrator.service - Foreman jobs daemon - orchestrator on sidekiq

Loaded: loaded (/usr/lib/systemd/system/dynflow-sidekiq@.service; enabled; preset: disabled)

Drop-In: /etc/systemd/system/dynflow-sidekiq@.service.d

└─override.conf

Active: active (running) since Mon 2025-11-10 20:22:59 UTC; 4min 7s ago

Docs: https://theforeman.org

Main PID: 3348261 (sidekiq)

Status: "orchestrator in passive mode"

Tasks: 9 (limit: 407762)

Memory: 298.1M

CPU: 9.287s

CGroup: /system.slice/system-dynflow\x2dsidekiq.slice/dynflow-sidekiq@orchestrator.service

└─3348261 /usr/bin/ruby /usr/bin/sidekiq -e production -r /usr/share/foreman/extras/dynflow-sidekiq.rb -C /etc/foreman/dynflow/orchestrator.yml

Nov 10 20:22:49 10-222-206-158.ssnc-corp.cloud systemd[1]: Starting Foreman jobs daemon - orchestrator on sidekiq...

Nov 10 20:22:49 10-222-206-158.ssnc-corp.cloud dynflow-sidekiq@orchestrator[3348261]: 2025-11-10T20:22:49.564Z pid=3348261 tid=1zqtx INFO: Enabling systemd notification integration

Nov 10 20:22:51 10-222-206-158.ssnc-corp.cloud dynflow-sidekiq@orchestrator[3348261]: 2025-11-10T20:22:51.600Z pid=3348261 tid=1zqtx INFO: Booting Sidekiq 6.5.12 with Sidekiq::RedisConnection::RedisAdapter options {:url=>"redis://10-222-172-152.ssnc>

Nov 10 20:22:51 10-222-206-158.ssnc-corp.cloud dynflow-sidekiq@orchestrator[3348261]: 2025-11-10T20:22:51.602Z pid=3348261 tid=1zqtx INFO: GitLab reliable fetch activated!

Nov 10 20:22:59 10-222-206-158.ssnc-corp.cloud systemd[1]: Started Foreman jobs daemon - orchestrator on sidekiq.

I'm really not sure what else to do here. I've tried everything I know.

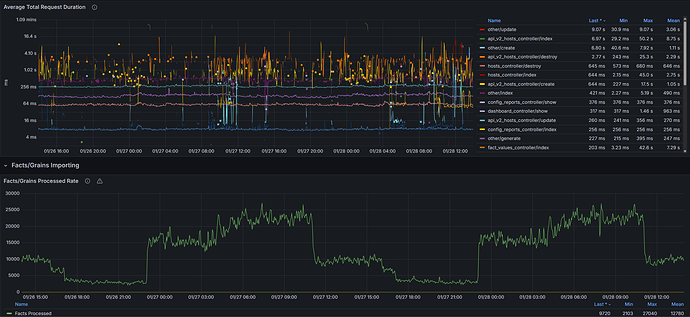

You can see the workers are handling their tasks, but the dynflow_orchestrator/coordinator just keeps growing (and thus no jobs complete):

[root@10-222-206-152 ~]# PGPASSWORD="$PGPASS" psql -h "$PGHOST" -U "$PGUSER" -d "$PGDB" -c "SELECT

(SELECT COUNT(*) FROM dynflow_coordinator_records) AS coordinator_records,

(SELECT COUNT(*) FROM dynflow_delayed_plans) AS delayed_plans,

(SELECT COUNT(*) FROM foreman_tasks_tasks WHERE state='running') AS running_tasks;"

coordinator_records | delayed_plans | running_tasks

---------------------+---------------+---------------

3715 | 0 | 15142

(1 row)

[root@10-222-206-152 ~]# PGPASSWORD="$PGPASS" psql -h "$PGHOST" -U "$PGUSER" -d "$PGDB" -c "SELECT

(SELECT COUNT(*) FROM dynflow_coordinator_records) AS coordinator_records,

(SELECT COUNT(*) FROM dynflow_delayed_plans) AS delayed_plans,

(SELECT COUNT(*) FROM foreman_tasks_tasks WHERE state='running') AS running_tasks;"

coordinator_records | delayed_plans | running_tasks

---------------------+---------------+---------------

3718 | 0 | 15145

(1 row)
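To put a number on the growth between the two samples above, here is a small sketch. `delta` is a hypothetical helper name; the inputs are the counts copied from the two query results.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the difference between two counter samples
# (later sample minus earlier sample).
delta() {
  echo $(( $2 - $1 ))
}

delta 3715 3718     # coordinator_records: prints 3
delta 15142 15145   # running_tasks: prints 3
```

Both counters grew by the same amount between the two runs, while delayed_plans stayed at 0.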

Any ideas?