Problem:

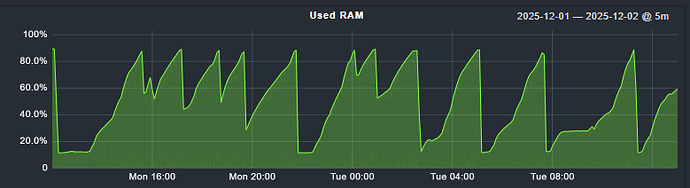

We are seeing regular OOM errors on our Foreman/Katello server. The server has 90GB of RAM, which I think should be more than enough.

The memory issues cause the system to hang and become unavailable.

Expected outcome:

The system should not consume all of the host's memory and should remain stable.

Foreman and Proxy versions:

foreman-3.16.1-1

katello-4.18.1-1

Installed Packages

Foreman and Proxy plugin versions:

Distribution and version:

AlmaLinux release 9.7 (Moss Jungle Cat)

5.14.0-611.5.1.el9_7.x86_64

Other relevant data:

An example of the logs from one of the OOM events:

Nov 28 09:24:28 uk1-foremansvr1 kernel: Hardware name: oVirt oVirt Node, BIOS 1.11.0-2.el7 04/01/2014

Nov 28 09:24:28 uk1-foremansvr1 kernel: Call Trace:

Nov 28 09:24:28 uk1-foremansvr1 kernel: <TASK>

Nov 28 09:24:28 uk1-foremansvr1 kernel: dump_stack_lvl+0x34/0x48

Nov 28 09:24:28 uk1-foremansvr1 kernel: dump_header+0x49/0x212

Nov 28 09:24:28 uk1-foremansvr1 kernel: oom_kill_process.cold+0xb/0x10

Nov 28 09:24:28 uk1-foremansvr1 kernel: out_of_memory+0xee/0x2b0

Nov 28 09:24:28 uk1-foremansvr1 kernel: __alloc_pages_slowpath.constprop.0+0x793/0xb10

Nov 28 09:24:28 uk1-foremansvr1 kernel: __alloc_pages+0x21c/0x250

Nov 28 09:24:28 uk1-foremansvr1 kernel: alloc_pages_mpol+0x93/0x1e0

Nov 28 09:24:28 uk1-foremansvr1 kernel: ? filemap_get_entry+0xe3/0x140

Nov 28 09:24:28 uk1-foremansvr1 kernel: folio_alloc+0x12/0x30

Nov 28 09:24:28 uk1-foremansvr1 kernel: __filemap_get_folio+0x189/0x2e0

Nov 28 09:24:28 uk1-foremansvr1 kernel: filemap_fault+0x4d5/0x8b0

Nov 28 09:24:28 uk1-foremansvr1 kernel: __do_fault+0x31/0x150

Nov 28 09:24:28 uk1-foremansvr1 kernel: do_read_fault+0x11e/0x1d0

Nov 28 09:24:28 uk1-foremansvr1 kernel: do_pte_missing+0x157/0x200

Nov 28 09:24:28 uk1-foremansvr1 kernel: __handle_mm_fault+0x2fe/0x650

Nov 28 09:24:28 uk1-foremansvr1 kernel: handle_mm_fault+0xfb/0x270

Nov 28 09:24:28 uk1-foremansvr1 kernel: do_user_addr_fault+0x215/0x620

Nov 28 09:24:28 uk1-foremansvr1 kernel: exc_page_fault+0x61/0x150

Nov 28 09:24:28 uk1-foremansvr1 kernel: asm_exc_page_fault+0x22/0x30

Nov 28 09:24:28 uk1-foremansvr1 kernel: RIP: 0033:0x55d2dfc84e10

Nov 28 09:24:28 uk1-foremansvr1 kernel: Code: Unable to access opcode bytes at 0x55d2dfc84de6.

Nov 28 09:24:28 uk1-foremansvr1 kernel: RSP: 002b:00007ffc1b004438 EFLAGS: 00010246

Nov 28 09:24:28 uk1-foremansvr1 kernel: RAX: 0000000000000006 RBX: 000055d2e11eb350 RCX: 000055d2e24f4300

Nov 28 09:24:28 uk1-foremansvr1 kernel: RDX: 000055d2e280defc RSI: 0000000000000006 RDI: 0000000000000006

Nov 28 09:24:28 uk1-foremansvr1 kernel: RBP: 00007ffc1b004450 R08: 0000000000000081 R09: 000055d2e11eb350

Nov 28 09:24:28 uk1-foremansvr1 kernel: R10: 000055d2e24fc460 R11: 0000000000000246 R12: 000055d2e25deeb8

Nov 28 09:24:28 uk1-foremansvr1 kernel: R13: 00007ffc1b004548 R14: 00007ffc1b004580 R15: 41535c5700000002

Nov 28 09:24:28 uk1-foremansvr1 kernel: </TASK>

Nov 28 09:24:28 uk1-foremansvr1 kernel: Mem-Info:

Nov 28 09:24:28 uk1-foremansvr1 kernel: active_anon:13506418 inactive_anon:10605264 isolated_anon:0#012 active_file:8733 inactive_file:12660 isolated_file:0#012 unevictable:0 dirty:0 writeback:5426#012 slab_reclaimable:41590 slab_unreclaimable:41023#012 mapped:440981 shmem:942003 pagetables:84312#012 sec_pagetables:0 bounce:0#012 kernel_misc_reclaimable:0#012 free:142454 free_pcp:0 free_cma:0

Nov 28 09:24:28 uk1-foremansvr1 kernel: Node 0 active_anon:54025672kB inactive_anon:42421056kB active_file:35288kB inactive_file:50284kB unevictable:0kB isolated(anon):0kB isolated(file):0kB mapped:1763924kB dirty:0kB writeback:21704kB shmem:3768012kB shmem_thp:0kB shmem_pmdmapped:0kB anon_thp:0kB writeback_tmp:0kB kernel_stack:22512kB pagetables:337248kB sec_pagetables:0kB all_unreclaimable? yes

Nov 28 09:24:28 uk1-foremansvr1 kernel: Node 0 DMA free:11264kB boost:0kB min:4kB low:16kB high:28kB reserved_highatomic:0KB active_anon:0kB inactive_anon:0kB active_file:0kB inactive_file:0kB unevictable:0kB writepending:0kB present:15992kB managed:15360kB mlocked:0kB bounce:0kB free_pcp:0kB local_pcp:0kB free_cma:0kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: lowmem_reserve: 0 2479 96026 96026 96026

Nov 28 09:24:28 uk1-foremansvr1 kernel: Node 0 DMA32 free:374736kB boost:0kB min:1004kB low:3504kB high:6004kB reserved_highatomic:0KB active_anon:431940kB inactive_anon:1716960kB active_file:520kB inactive_file:3632kB unevictable:0kB writepending:764kB present:3129176kB managed:2539352kB mlocked:0kB bounce:0kB free_pcp:0kB local_pcp:0kB free_cma:0kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: lowmem_reserve: 0 0 93546 93546 93546

Nov 28 09:24:28 uk1-foremansvr1 kernel: Node 0 Normal free:183816kB boost:0kB min:38592kB low:134376kB high:230160kB reserved_highatomic:145408KB active_anon:53594000kB inactive_anon:40703828kB active_file:35760kB inactive_file:45660kB unevictable:0kB writepending:20188kB present:97517568kB managed:95791924kB mlocked:0kB bounce:0kB free_pcp:0kB local_pcp:0kB free_cma:0kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: lowmem_reserve: 0 0 0 0 0

Nov 28 09:24:28 uk1-foremansvr1 kernel: Node 0 DMA: 0*4kB 0*8kB 0*16kB 0*32kB 0*64kB 0*128kB 0*256kB 0*512kB 1*1024kB (U) 1*2048kB (M) 2*4096kB (M) = 11264kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: Node 0 DMA32: 273*4kB (UM) 300*8kB (UME) 220*16kB (UE) 178*32kB (UE) 54*64kB (UME) 39*128kB (UME) 11*256kB (UE) 8*512kB (UE) 2*1024kB (UE) 4*2048kB (UME) 82*4096kB (UME) = 374180kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: Node 0 Normal: 2708*4kB (UME) 1786*8kB (UME) 450*16kB (UME) 212*32kB (UME) 81*64kB (UME) 125*128kB (UME) 52*256kB (UME) 18*512kB (UME) 11*1024kB (UME) 4*2048kB (UME) 20*4096kB (ME) = 184192kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: Node 0 hugepages_total=0 hugepages_free=0 hugepages_surp=0 hugepages_size=1048576kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: Node 0 hugepages_total=0 hugepages_free=0 hugepages_surp=0 hugepages_size=2048kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: 2084078 total pagecache pages

Nov 28 09:24:28 uk1-foremansvr1 kernel: 1120694 pages in swap cache

Nov 28 09:24:28 uk1-foremansvr1 kernel: Free swap = 236kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: Total swap = 8388604kB

Nov 28 09:24:28 uk1-foremansvr1 kernel: 25165684 pages RAM

Nov 28 09:24:28 uk1-foremansvr1 kernel: 0 pages HighMem/MovableOnly

Nov 28 09:24:28 uk1-foremansvr1 kernel: 579025 pages reserved

Nov 28 09:24:28 uk1-foremansvr1 kernel: 0 pages cma reserved

Nov 28 09:24:28 uk1-foremansvr1 kernel: 0 pages hwpoisoned

Nov 28 09:24:28 uk1-foremansvr1 kernel: Tasks state (memory values in pages):

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ pid ] uid tgid total_vm rss pgtables_bytes swapents oom_score_adj name

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 610] 0 610 49303 31690 434176 224 -250 systemd-journal

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 625] 0 625 9628 48 90112 832 -1000 systemd-udevd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 790] 32 790 3689 96 65536 192 0 rpcbind

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 791] 0 791 5795 72 65536 224 -1000 auditd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 824] 81 824 2732 132 61440 160 -900 dbus-broker-lau

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 825] 81 825 1424 187 49152 64 -900 dbus-broker

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 826] 0 826 65044 320 135168 480 0 NetworkManager

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 830] 0 830 604275 1815 294912 477 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 831] 0 831 20693 64 61440 32 0 irqbalance

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 832] 996 832 679 96 45056 32 0 lsmd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 833] 0 833 708 32 40960 32 0 mcelog

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 834] 0 834 24433 128 69632 32 0 qemu-ga

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 835] 2 835 5011 128 73728 224 0 rngd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 838] 0 838 5705 437 86016 352 0 systemd-logind

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 849] 991 849 21215 13 73728 128 0 chronyd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 867] 0 867 364438 1483 225280 224 0 rapid7_agent_co

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 868] 0 868 530561 1204 249856 224 0 rapid7_endpoint

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 912] 0 912 2145 320 73728 128 0 check_mk_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 918] 982 918 4151 393 69632 160 0 cmk-agent-ctl

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 921] 985 921 57556 12904 385024 8415 0 smart-proxy

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 933] 0 933 2479 96 53248 64 0 oddjobd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 942] 984 942 46697 1501 409600 30560 0 pulpcore-api

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 947] 984 947 46411 2012 413696 29792 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 951] 0 951 4938 160 77824 384 -1000 sshd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 969] 91 969 2578366 321207 4743168 150299 0 java

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 976] 0 976 66624 276 159744 3264 0 tuned

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 977] 0 977 21316 320 163840 512 0 winbindd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 979] 0 979 14150 17 86016 224 0 gssproxy

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 985] 984 985 46393 3237 409600 28480 0 pulpcore-worker

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 990] 984 990 46392 3499 413696 28288 0 pulpcore-worker

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 992] 984 992 46392 3671 409600 28192 0 pulpcore-worker

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 995] 984 995 46394 3378 417792 28384 0 pulpcore-worker

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 999] 984 999 46392 2992 413696 28832 0 pulpcore-worker

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1002] 984 1002 46393 3704 409600 28128 0 pulpcore-worker

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1004] 984 1004 46392 3141 413696 28704 0 pulpcore-worker

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1005] 984 1005 46392 2696 409600 29120 0 pulpcore-worker

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1009] 0 1009 54346 11109 335872 7072 0 puppet

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1011] 983 1011 1471776 224503 10358784 972768 0 redis-server

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1023] 0 1023 132857 5429 434176 205 0 rsyslogd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1027] 26 1027 1107313 18240 417792 416 -1000 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1064] 0 1064 6009 453 90112 448 0 httpd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1081] 0 1081 3287 160 61440 192 0 atd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1082] 0 1082 2147 192 57344 160 0 crond

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1090] 0 1090 764 64 45056 0 0 agetty

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1286] 26 1286 17541 435 106496 416 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1398] 0 1398 22791 160 172032 608 0 smbd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1400] 0 1400 21286 239 139264 544 0 wb[UK1-FOREMANS

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1404] 26 1404 1107429 356459 7417856 384 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1405] 26 1405 1107378 13483 294912 416 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1406] 26 1406 1107313 4267 151552 416 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1407] 26 1407 1107658 459 167936 416 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1408] 26 1408 17031 299 98304 416 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1409] 26 1409 1107582 427 147456 448 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1441] 0 1441 21795 239 163840 672 0 wb[ION]

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1460] 0 1460 21751 173 122880 640 0 smbd-notifyd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1461] 0 1461 21755 173 126976 640 0 smbd-cleanupd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1506] 998 1506 746133 441 258048 768 0 polkitd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1518] 0 1518 21627 310 151552 544 0 wb-idmap

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1542] 0 1542 11676 196 135168 4736 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 1748] 0 1748 59842 288 393216 2880 0 linux_agent_exe

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2630] 26 2630 1108756 13323 499712 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2631] 26 2631 1108701 12875 491520 512 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2632] 26 2632 1108756 13387 503808 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2633] 26 2633 1108708 12779 491520 512 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2634] 26 2634 1108708 12747 491520 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2635] 26 2635 1108701 11627 462848 576 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2636] 26 2636 1108708 12619 487424 576 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2637] 26 2637 1108738 13163 483328 480 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2638] 984 2638 83606 29395 425984 3008 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2641] 26 2641 1108862 14293 462848 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2735] 26 2735 1108716 3563 245760 736 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2994] 986 2994 241314 203388 1986560 17817 0 sidekiq

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2997] 986 2997 295081 239473 2326528 23809 0 sidekiq

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2998] 986 2998 352592 313900 2842624 16517 0 sidekiq

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3000] 986 3000 199177 155395 1576960 16909 0 sidekiq

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3013] 986 3013 170122 83870 1339392 53871 0 sidekiq

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3078] 26 3078 1108779 7179 389120 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3079] 26 3079 1108772 10379 458752 576 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3080] 26 3080 1108760 8779 401408 512 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3458] 26 3458 1108470 3467 253952 640 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3462] 26 3462 1108733 32203 2248704 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3463] 26 3463 1108780 32698 2273280 608 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3472] 26 3472 1108725 33707 2314240 575 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3473] 26 3473 1108720 33195 2273280 576 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3474] 26 3474 1108762 33945 2252800 576 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3488] 26 3488 1108479 4075 258048 640 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3493] 26 3493 1108719 35243 2416640 640 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3497] 26 3497 1108471 3595 253952 608 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3501] 26 3501 1108736 35207 2428928 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3502] 26 3502 1108740 34315 2420736 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3506] 26 3506 1108704 45685 3805184 576 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3510] 26 3510 1108444 2315 237568 576 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3521] 26 3521 1108479 4011 258048 672 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3525] 26 3525 1108690 42059 3383296 640 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 3620] 26 3620 1108684 46329 3715072 640 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 5574] 26 5574 1108732 34316 2400256 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 19116] 26 19116 1108731 34380 2396160 608 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 19117] 26 19117 1108741 34092 2387968 576 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 24545] 26 24545 1108729 34732 2379776 544 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 60253] 26 60253 1108751 34476 2367488 512 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 82692] 26 82692 1108677 45256 3645440 556 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 82846] 26 82846 1108763 34700 2392064 620 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 82847] 26 82847 1108764 33132 2318336 588 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 82964] 26 82964 1108763 35052 2396160 556 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 399822] 26 399822 1108700 45774 3653632 554 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 629055] 0 629055 112326 381 212992 7840 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 744597] 0 744597 11676 236 131072 4736 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 773342] 26 773342 1108733 31374 1794048 554 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [1011528] 0 1011528 145094 267 221184 7840 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [1235742] 0 1235742 26857 948 225280 2848 0 s1-orchestrator

Nov 28 09:24:28 uk1-foremansvr1 kernel: [1235745] 980 1235745 330572 4847 434176 5376 0 s1-network

Nov 28 09:24:28 uk1-foremansvr1 kernel: [1235747] 0 1235747 80392 12584 442368 15008 0 s1-scanner

Nov 28 09:24:28 uk1-foremansvr1 kernel: [1235749] 0 1235749 583654 92704 1839104 22368 0 s1-agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [1235751] 0 1235751 47871 1408 163840 2880 0 s1-firewall

Nov 28 09:24:28 uk1-foremansvr1 kernel: [1402873] 26 1402873 1108636 36526 2625536 522 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [1948521] 0 1948521 11676 1126 131072 3872 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [2292207] 0 2292207 145094 1169 221184 6912 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [2538915] 0 2538915 953062 11078 610304 2784 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [2538922] 0 2538922 11676 2277 131072 2720 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [2634562] 26 2634562 1108745 8493 425984 395 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [2634563] 26 2634563 1108760 8301 438272 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [2798233] 0 2798233 145094 7157 225280 992 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [3277696] 984 3277696 83621 8889 417792 23350 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [3277705] 984 3277705 83621 8889 417792 23318 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [3277707] 984 3277707 83621 8889 417792 23318 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [3277780] 26 3277780 1108864 13755 446464 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [3277784] 26 3277784 1108864 14479 450560 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [3277790] 26 3277790 1108864 14047 454656 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [3707404] 984 3707404 83621 8854 421888 23385 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [3707678] 26 3707678 1108863 15754 458752 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052377] 984 4052377 83621 8854 417792 23321 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052379] 984 4052379 83621 8822 413696 23321 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052384] 26 4052384 1108864 14837 442368 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052385] 26 4052385 1108864 14726 454656 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052386] 984 4052386 83621 8854 417792 23385 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052395] 984 4052395 83621 8895 413696 23321 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052399] 26 4052399 1108864 15398 462848 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052400] 984 4052400 102058 8822 425984 23353 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052401] 984 4052401 83621 8854 417792 23417 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052405] 26 4052405 1108864 14838 450560 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052407] 26 4052407 1108864 15566 458752 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052410] 984 4052410 83621 8834 413696 23331 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052412] 26 4052412 1108864 14926 462848 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052414] 984 4052414 83621 8822 417792 23385 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052416] 26 4052416 1108864 14411 454656 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052425] 26 4052425 1108864 15986 454656 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052429] 984 4052429 83621 8822 417792 23385 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052434] 984 4052434 83621 8854 413696 23353 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052435] 26 4052435 1108864 15941 462848 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052437] 984 4052437 83621 8911 417792 23385 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052438] 26 4052438 1108864 15908 458752 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4052441] 26 4052441 1108864 16306 458752 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4137798] 0 4137798 5439 672 94208 0 0 sshd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4137802] 0 4137802 6257 990 90112 0 100 systemd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4137804] 0 4137804 44726 1300 118784 645 100 (sd-pam)

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4137811] 0 4137811 5438 627 86016 64 0 sshd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [4137812] 0 4137812 2214 544 69632 0 0 bash

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 139751] 986 139751 129937 98216 950272 0 0 rails

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 140200] 26 140200 1108786 6196 286720 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 181416] 0 181416 1382076 10755 417792 0 0 rapid7_velocira

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217233] 986 217233 8774797 8723834 70238208 0 0 rails

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217530] 26 217530 1108471 3405 253952 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217540] 26 217540 1108472 4205 307200 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217542] 26 217542 1108530 4557 331776 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217544] 26 217544 1108720 7362 311296 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217901] 986 217901 8752815 8668382 71086080 0 0 rails

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217907] 26 217907 1108471 3373 253952 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217910] 26 217910 1108700 10061 598016 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217911] 26 217911 1108693 10221 593920 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217913] 26 217913 1108714 7363 311296 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217932] 984 217932 83621 8756 417792 23355 0 pulpcore-conten

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217933] 26 217933 1108753 10476 557056 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217937] 26 217937 1108749 5518 278528 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217944] 26 217944 1108864 14345 471040 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217952] 986 217952 2686261 2620396 21569536 0 0 rails

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217960] 26 217960 1108471 3373 253952 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217964] 26 217964 1108694 4589 335872 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217965] 26 217965 1108720 7394 311296 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 230644] 26 230644 1110029 75962 2899968 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 259324] 26 259324 1109362 61549 2416640 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 281972] 26 281972 1109257 20427 1130496 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 286739] 984 286739 46730 6984 393216 25166 0 pulpcore-api

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 286740] 984 286740 46730 6920 393216 25198 0 pulpcore-api

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 286741] 984 286741 46730 6984 393216 25134 0 pulpcore-api

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 286742] 984 286742 46730 6920 393216 25198 0 pulpcore-api

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 286743] 984 286743 46730 6920 393216 25230 0 pulpcore-api

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 286755] 0 286755 98962 2744 516096 2510 0 linux_agent_exe

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 286936] 986 286936 145070 120486 1183744 0 0 rails

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 287229] 26 287229 1108495 6765 360448 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 287237] 26 287237 1108495 6701 360448 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 287244] 26 287244 1108495 6797 360448 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 287249] 26 287249 1108495 6765 360448 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 287250] 26 287250 1108495 6765 360448 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 287314] 26 287314 1108471 3373 253952 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 287318] 26 287318 1108562 4301 315392 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 287319] 26 287319 1108465 4141 311296 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 287320] 26 287320 1108714 7395 311296 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 288156] 0 288156 107388 7973 192512 0 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 288626] 0 288626 242798 8143 278528 0 0 ir_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 290705] 26 290705 1109781 57533 2162688 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 290706] 48 290706 616432 3355 372736 412 0 httpd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 290707] 48 290707 616432 3579 372736 412 0 httpd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 293636] 26 293636 1113804 76256 2580480 331 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 315228] 26 315228 1109360 28720 1400832 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 316737] 26 316737 1108947 20042 1011712 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 317109] 26 317109 1110773 49192 2502656 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 318161] 26 318161 1109133 22669 1056768 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 319129] 26 319129 1110517 36885 2138112 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 319130] 26 319130 1110507 31045 1814528 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 319577] 26 319577 1109098 9812 544768 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 319578] 26 319578 1109905 26572 1241088 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 319579] 26 319579 1108655 6413 389120 395 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 319580] 26 319580 1112991 40890 1597440 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321474] 26 321474 1110511 14043 786432 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321475] 26 321475 1110768 14344 761856 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321498] 26 321498 1108655 3885 253952 395 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321505] 26 321505 1108655 3917 253952 363 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321517] 0 321517 6009 315 81920 508 0 httpd

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321534] 26 321534 1108455 1965 233472 395 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321545] 26 321545 1108610 2605 241664 395 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321555] 983 321555 1471810 248363 10342400 971238 0 redis-server

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321558] 26 321558 1107635 589 180224 395 0 postmaster

Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 321560] 0 321560 2145 219 65536 123 0 check_mk_agent

Nov 28 09:24:28 uk1-foremansvr1 kernel: oom-kill:constraint=CONSTRAINT_NONE,nodemask=(null),cpuset=/,mems_allowed=0,global_oom,task_memcg=/system.slice/foreman.service,task=rails,pid=217233,uid=986

Nov 28 09:24:28 uk1-foremansvr1 kernel: Out of memory: Killed process 217233 (rails) total-vm:35099188kB, anon-rss:34895336kB, file-rss:0kB, shmem-rss:0kB, UID:986 pgtables:68592kB oom_score_adj:0
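To make the task table above easier to read, the per-process `rss` column can be totalled by process name. The following is only a rough sketch: it assumes the log lines are in the syslog format shown above and that pages are 4 kB (the killed process's 8723834 rss pages times 4 equals the 34895336kB anon-rss in the kill message, which is consistent with that). The function names `rss_by_name` and `top_consumers` are made up for illustration.

```python
import re
from collections import defaultdict

PAGE_KB = 4  # assumption: 4 kB pages, consistent with the kill message above

# Matches one data row of the "Tasks state" table:
# [ pid ] uid tgid total_vm rss pgtables_bytes swapents oom_score_adj name
# The header row has no numeric pid inside the brackets, so it is skipped.
ROW = re.compile(
    r"kernel: \[\s*(?P<pid>\d+)\]\s+(?P<uid>\d+)\s+(?P<tgid>\d+)\s+"
    r"(?P<total_vm>\d+)\s+(?P<rss>\d+)\s+(?P<pgtables>\d+)\s+"
    r"(?P<swapents>\d+)\s+(?P<adj>-?\d+)\s+(?P<name>.+?)\s*$"
)


def rss_by_name(lines):
    """Sum resident memory (in kB) per process name from task-table rows."""
    totals = defaultdict(int)
    for line in lines:
        match = ROW.search(line)
        if match:
            totals[match.group("name")] += int(match.group("rss")) * PAGE_KB
    return dict(totals)


def top_consumers(lines, n=5):
    """Return the n process names with the largest summed RSS, largest first."""
    totals = rss_by_name(lines)
    return sorted(totals.items(), key=lambda kv: kv[1], reverse=True)[:n]


if __name__ == "__main__":
    # Three rows copied from the log above; in practice, read the saved log file.
    sample = [
        "Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217233] 986 217233 8774797 8723834 70238208 0 0 rails",
        "Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 217901] 986 217901 8752815 8668382 71086080 0 0 rails",
        "Nov 28 09:24:28 uk1-foremansvr1 kernel: [ 2998] 986 2998 352592 313900 2842624 16517 0 sidekiq",
    ]
    for name, kb in top_consumers(sample):
        print(f"{name:<16} {kb / 1024 / 1024:6.1f} GiB")
```

By the same arithmetic, the three largest rails processes in the table (PIDs 217233, 217901 and 217952) hold roughly 76 GiB resident between them, so they appear to account for most of the memory in use at the time of the kill.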