Thanks, @ekohl! This is helpful!

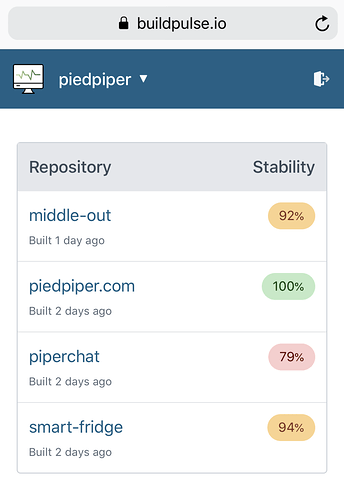

When I open the app, I see 2 organizations: Katello and theforeman. However, when I click through Katello is empty and in theforeman I see one repository.

Thanks for pointing this out! Currently, BuildPulse is installed on theforeman organization with access to one repository (theforeman/foreman) and on the Katello organization with access to one repository (Katello/katello). With that in mind, it makes sense to me that you see one repository under theforeman organization, but I would have expected you to also see one repository under the Katello organization.

BuildPulse (like all GitHub apps) uses this API endpoint to determine which repositories a user can access. The endpoint returns “repositories that the authenticated user has explicit permission (:read, :write, or :admin) to access for an installation.” When fetching the list of accessible repositories for your user account for the Katello installation, the API returns an empty array. From what I see so far, I think your account might be lacking explicit permission to Katello/katello. You at least have implicit read access to it, because it’s a public repository. To help figure out whether I’m interpreting this situation correctly, are you able to see what permissions you have for Katello/katello on GitHub?
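
For anyone curious what that lookup looks like, here is a minimal sketch. It assumes the endpoint in question is GitHub's `GET /user/installations/{installation_id}/repositories`, called with a user access token. The function names, the installation ID, and the token below are placeholders for illustration, not BuildPulse's actual code.

```python
import json
import urllib.request

API_ROOT = "https://api.github.com"


def build_request(installation_id: int, user_token: str) -> urllib.request.Request:
    """Build the GET request listing repositories a user can access in one installation."""
    # Assumed endpoint: GET /user/installations/{installation_id}/repositories
    url = f"{API_ROOT}/user/installations/{installation_id}/repositories"
    headers = {
        "Accept": "application/vnd.github+json",
        "Authorization": f"Bearer {user_token}",
    }
    return urllib.request.Request(url, headers=headers)


def repo_names(payload: dict) -> list[str]:
    """Extract full repository names (owner/name) from the JSON response body."""
    return [repo["full_name"] for repo in payload.get("repositories", [])]


def list_accessible_repos(installation_id: int, user_token: str) -> list[str]:
    """Fetch the first page of results (makes a network call; pagination omitted)."""
    with urllib.request.urlopen(build_request(installation_id, user_token)) as resp:
        return repo_names(json.load(resp))
```

With a response whose `repositories` array is empty, as in the Katello case described above, `repo_names` returns an empty list, which is why the organization shows up with nothing under it.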

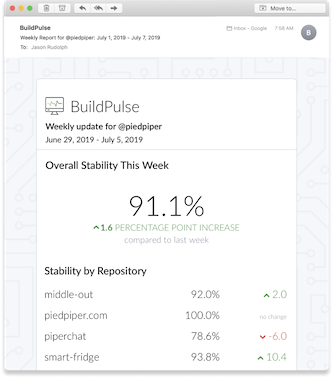

The pages feel very empty and perhaps this can be slightly optimized by merging it into one (low priority).

You’re right: these pages are so barebones right now. Providing a richer list view is definitely something I want to do.

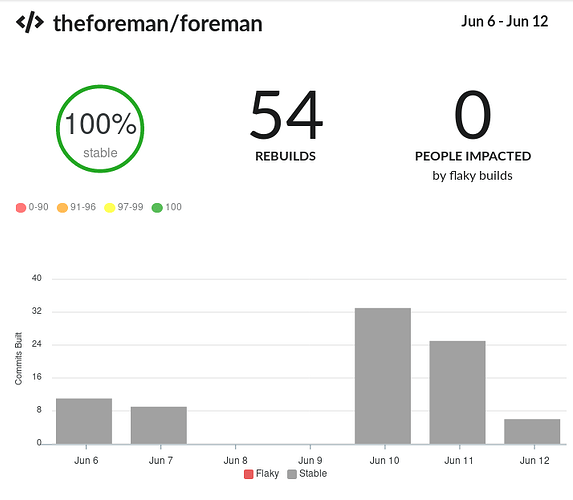

The Foreman repository now has 100% stable builds (see below) and that means I have no controls that do anything. Perhaps the organization / repository part could be links? I also wondered about other dates so perhaps it needs a date picker if that data is available?

Congratulations on 100% stable builds!

I agree that it would help for the page to provide more interactivity, and selecting various date ranges seems really useful to me.

I’ll also add some repositories so there’s a bit more data.

Cool! So far, I’ve focused on the experience for a repository that already has at least a week’s worth of flaky build analysis, and I haven’t yet worked on the “blank slate” experience. When you add more repositories, BuildPulse will need to monitor commit statuses for a few days before you start seeing a useful UI. So at first you’ll see a rather underwhelming blank slate, but after about a week you should have a richer UI like the one you currently see for theforeman/foreman.

Longer term I wonder if it’s possible to dive into test results. … it’d be great to automatically identify specific flaky tests.

I agree wholeheartedly! I think that would make a big impact for teams that are battling flaky tests.