Problem:

1. After restoring a backup to a new RHEL 9 Foreman/Katello server, /var/lib/foreman-proxy/ssh/ still contains the old Foreman/Katello server's keys (they were not updated with the new server's details).

2. After a new OS installation via Foreman PXE, the OS installs fine, but the Ansible role run that follows fails because of an SSH key permission issue.

3. How do I update the new Foreman server's details on the hosts listed under Hosts > All Hosts?

Expected outcome:

Foreman and Proxy versions: 3.12.1 & 3.12.1

Katello versions: 4.14

Foreman and Proxy plugin versions: 3.12.1

Distribution and version: RHEL - 9.7

Other relevant data:

Explanation:

I have an old Foreman/Katello environment (3.12 / 4.14) on RHEL 8.10. Because of the RHEL 8 OS restriction I cannot upgrade Foreman/Katello to a higher version there, so I built a new RHEL 9.7 server and installed the same Foreman 3.12.1 and Katello 4.14 versions with the same plugins. The migration steps were:

1. Created a backup without Pulp data on the old Foreman server, transferred it to the new server, and shut down the old server.
2. Changed the hostname of the new server to the old server's name and restored the backup.
3. After the successful restore, reverted the hostname to the new server's own hostname.
4. Ran the `katello-change-hostname` command on the new server.
5. Ran `foreman-installer --foreman-proxy-foreman-base-url https://<new_hostname> --foreman-proxy-trusted-hosts <new_hostname> --puppet-server-foreman-url https://<new_hostname>`.
6. Updated the host in the Hammer configuration file `/etc/hammer/cli.modules.d/foreman.yml`.

My Foreman/Katello setup is now working fine, but the foreman-proxy directory /var/lib/foreman-proxy/ssh/ still had the old Foreman server's keys. So I deleted all the keys and regenerated them with the command `sudo -u foreman-proxy ssh-keygen -t rsa -b 4096 -f /var/lib/foreman-proxy/ssh/id_rsa_foreman_proxy -N ""`
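Since the failure is reported as an SSH key permission issue, here is a minimal sketch of how the regenerated key pair could be checked on the Foreman server. The function name `check_key_perms` is hypothetical (it is not part of Foreman), and it assumes GNU `stat` as found on RHEL. It only verifies that both key files exist and that the private key is readable by its owner alone; it prints the owner but does not enforce it.

```shell
#!/usr/bin/env bash
# check_key_perms DIR KEYNAME
# Hypothetical helper, not a Foreman tool: checks that DIR/KEYNAME and
# DIR/KEYNAME.pub exist and that the private key has mode 600 or 400.
check_key_perms() {
  local dir="$1" name="$2"
  local key="$dir/$name"

  if [ ! -f "$key" ] || [ ! -f "$key.pub" ]; then
    echo "missing: $key or $key.pub"
    return 1
  fi

  local mode
  mode=$(stat -c '%a' "$key")   # GNU stat: octal permission bits

  case "$mode" in
    600|400) ;;                  # owner-only access, which ssh accepts
    *)
      echo "bad mode $mode on $key (expected 600 or 400)"
      return 1
      ;;
  esac

  # Owner is shown for information only; it should normally be foreman-proxy.
  echo "ok: $key owner=$(stat -c '%U' "$key") mode=$mode"
}
```

With the path from this post it would be called as `check_key_perms /var/lib/foreman-proxy/ssh id_rsa_foreman_proxy`, run as root or as the foreman-proxy user.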

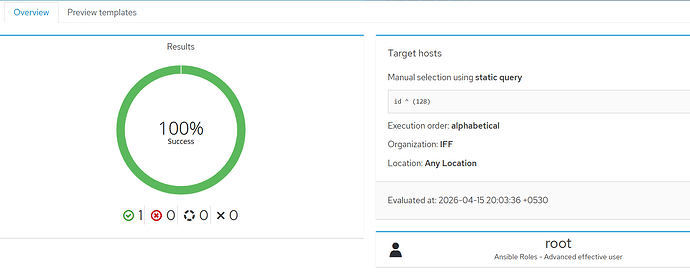

Then I added the public key to /root/.ssh/authorized_keys on the same Foreman server for testing and ran a job against the Foreman server itself; that job was successful.

But when I do a new OS installation from the new Foreman server, the OS installation completes without any issue, yet the Ansible role run fails because the Foreman SSH key is not copied to the newly installed server.

[DEPRECATION WARNING]: ANSIBLE_CALLBACK_WHITELIST option, normalizing names to new standard, use ANSIBLE_CALLBACKS_ENABLED instead. This feature will be removed from ansible-core in version 2.15. Deprecation warnings can be disabled by setting deprecation_warnings=False in ansible.cfg.

PLAY [all] *********************************************************************

TASK [Gathering Facts] *********************************************************

fatal: [Client_name.com]: UNREACHABLE! => {"changed": false, "msg": "Failed to connect to the host via ssh: Warning: Permanently added '10.101.62.27' (ED25519) to the list of known hosts.\r\nroot@xx.xx.xx.xx: Permission denied (publickey,gssapi-keyex,gssapi-with-mic,password).", "unreachable": true}

PLAY RECAP *********************************************************************

Client_name.com : ok=0 changed=0 unreachable=1 failed=0 skipped=0 rescued=0 ignored=0

Exit status: 1

StandardError: Job execution failed

I tried creating a global parameter `remote_execution_ssh_keys` (type String, with the SSH public key pasted as the value), but it did not work, which means the SSH keys are still not being copied to the host.
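To confirm on a freshly installed host whether the key really was not copied, a check along these lines could be used. This is only a sketch: `key_is_authorized` is a hypothetical helper, and it assumes the usual one-line public key format (`type base64-blob comment`). It matches on the base64 blob as a plain substring, so it ignores differences in the trailing comment.

```shell
#!/usr/bin/env bash
# key_is_authorized PUBKEY_FILE AUTHORIZED_KEYS_FILE
# Hypothetical helper: succeeds (exit 0) if the key blob from PUBKEY_FILE
# appears anywhere in AUTHORIZED_KEYS_FILE.
key_is_authorized() {
  local pub="$1" auth="$2"
  local blob

  # Second whitespace-separated field of the first line is the base64 key data.
  blob=$(awk '{print $2; exit}' "$pub")
  [ -n "$blob" ] || return 2

  # -F: fixed-string match, since base64 contains characters like + and /.
  grep -qF -- "$blob" "$auth"
}
```

For example, after copying `/var/lib/foreman-proxy/ssh/id_rsa_foreman_proxy.pub` to the client as `/tmp/proxy.pub` (an assumed location), `key_is_authorized /tmp/proxy.pub /root/.ssh/authorized_keys && echo present || echo absent` would show whether provisioning deployed the key.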

After the backup restore, the new Foreman shows all the hosts (1000+) that existed in the old Foreman under Hosts > All Hosts, but I cannot run jobs against them because they are still registered with the old Foreman server, not the new one. How can I update the new Foreman server's details on all of these hosts so that I can manage them from the new Foreman? Is there a specific procedure I have to follow, or did I miss something?